TCG & Web Scraping, pt1: market data

"Building an automated web scraper to gather TCG market data"

1. The Genesis

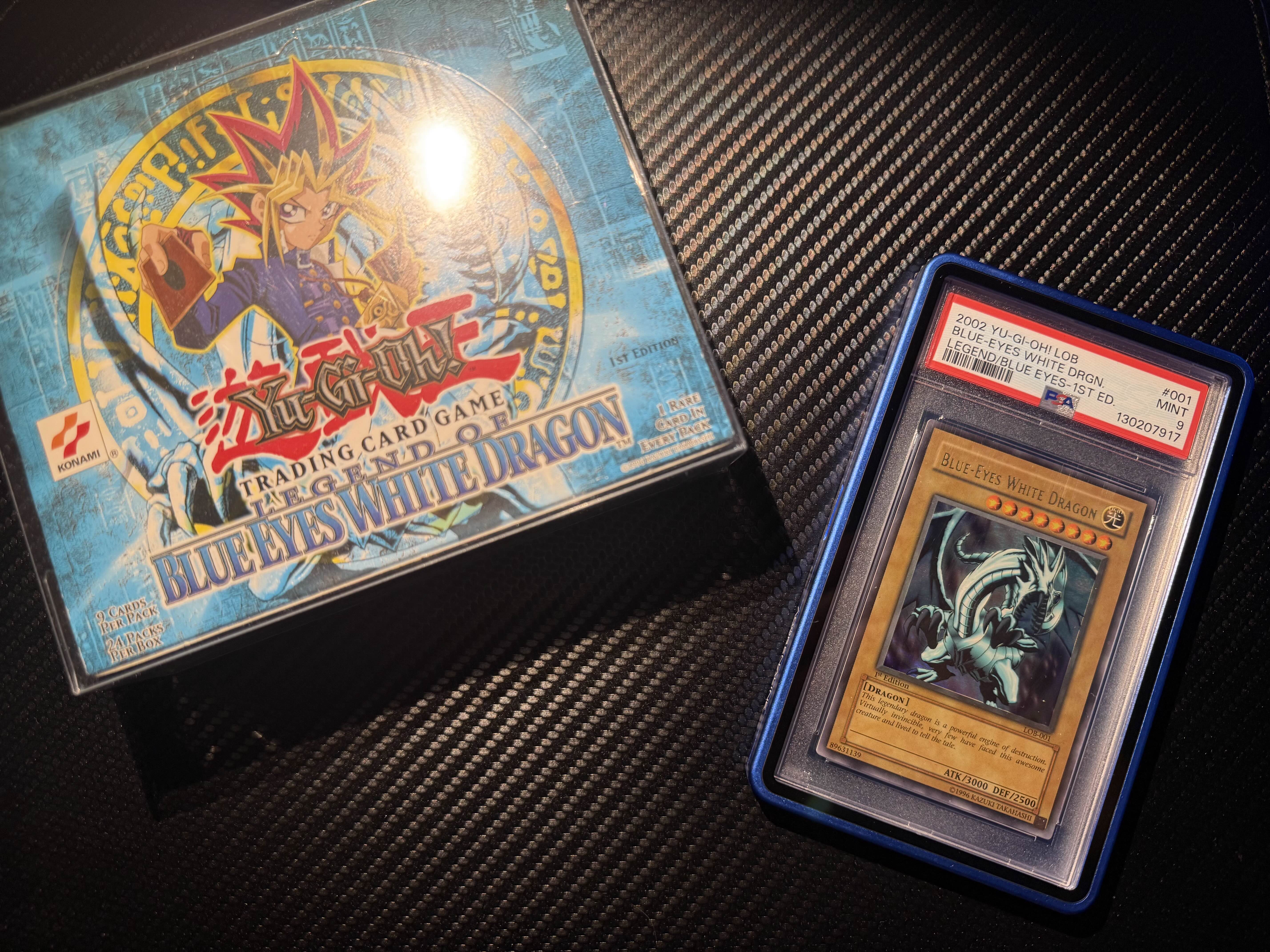

It’s no secret that I’ve been into trading cards for a while. Perhaps it's just a heavy dose of nostalgia for my youth? Either way, it led me to collect the items I could only dream about as a kid. That hobby eventually brought me to a YouTube video by the influencer Strictly Sealed.

I joined his Discord and we chatted casually. Eventually, the conversation turned to tech, and I mentioned that automating his market data collection would be a game-changer. The market data was a perk he offered through his Patreon, but at the time it was collected entirely by hand. It was tedious and, to say the least, a massive pain. That’s when I took my first dive into the world of web scraping, moving beyond my background in raw Python.

The task was clear: for each major selling platform, we needed a script capable of pulling screenshots of sales to be posted directly to Discord.

2. The Biggest of Them All: eBay

Who hasn’t used eBay? It’s a great platform (if you can ignore the seller fees). You can run auctions, buy at a fixed price, or even send offers. Its biggest advantage is that you can search sold items and sort them by sale date. The downside is that a listing marked as sold was not necessarily paid for. The platform also lacks heavy anti-bot restrictions; using undetected-chromedriver is usually more than enough.

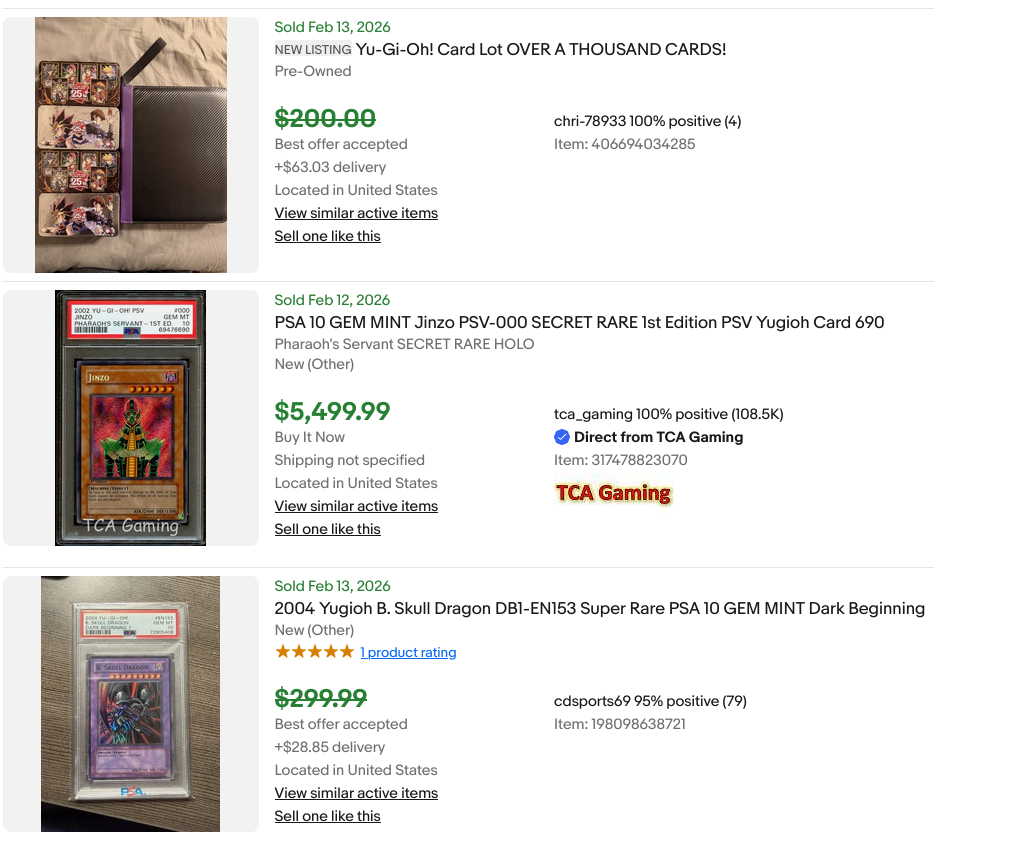

The output of a search through "completed sales" on eBay.

From there, the job is straightforward. Automating the decision of "which sale is relevant" is still a project for the future. For now, the user browses the results, picks the relevant sales, and pastes the URLs into a text file. The bot then verifies that each sale date is current because, funnily enough, eBay occasionally pushes year-old sales to the top of the "most recent" list.
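To give a rough idea of that date check, here is a minimal sketch rather than the bot's actual code. The sold-date text format ("Sold Mar 4, 2024"), the 30-day window, and the function name are all assumptions made for illustration.

```python
from datetime import date, datetime, timedelta


def is_recent_sale(sold_text: str, today: date, max_age_days: int = 30) -> bool:
    """Return True if the sale happened within max_age_days before today."""
    # Assumed text format, e.g. "Sold Mar 4, 2024"; the real page may differ.
    cleaned = sold_text.replace("Sold", "").strip()
    sold_on = datetime.strptime(cleaned, "%b %d, %Y").date()
    age = today - sold_on
    # Reject future dates as well as stale ones.
    return timedelta(0) <= age <= timedelta(days=max_age_days)
```

Passing `today` in explicitly, instead of calling `date.today()` inside the function, keeps the check easy to test against saved listings.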

Then comes another quirk: clicking a sale should land you on the item page to be screenshotted. However, sometimes—and I suspect this is linked to payment status—you land on a completely irrelevant page. The bot has to cross-reference the URL and item title to ensure it's on the right page before taking the shot. But what happens if an item sold for a "Best Offer" or the page simply isn't accessible?
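The cross-check itself can be sketched in a few lines. This is only an illustration under assumptions: it supposes item URLs contain an `/itm/<numeric id>` segment and that titles can be compared after stripping case and punctuation. The helper names are hypothetical.

```python
import re
from typing import Optional


def extract_item_id(url: str) -> Optional[str]:
    """Pull the numeric item id out of a URL (assumed /itm/<id> layout)."""
    match = re.search(r"/itm/(?:[^/?#]+/)?(\d{9,})", url)
    return match.group(1) if match else None


def normalize_title(title: str) -> str:
    """Lowercase the title and keep only alphanumeric words."""
    return " ".join(re.findall(r"[a-z0-9]+", title.lower()))


def is_expected_page(expected_url: str, expected_title: str,
                     landed_url: str, landed_title: str) -> bool:
    """True only if both the item id and the normalized title match."""
    expected_id = extract_item_id(expected_url)
    same_item = expected_id is not None and expected_id == extract_item_id(landed_url)
    same_title = normalize_title(expected_title) == normalize_title(landed_title)
    return same_item and same_title
```

Requiring both the id and the title to match is deliberately strict: a false "wrong page" only costs a fallback lookup, while a false "right page" would post an unrelated screenshot.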

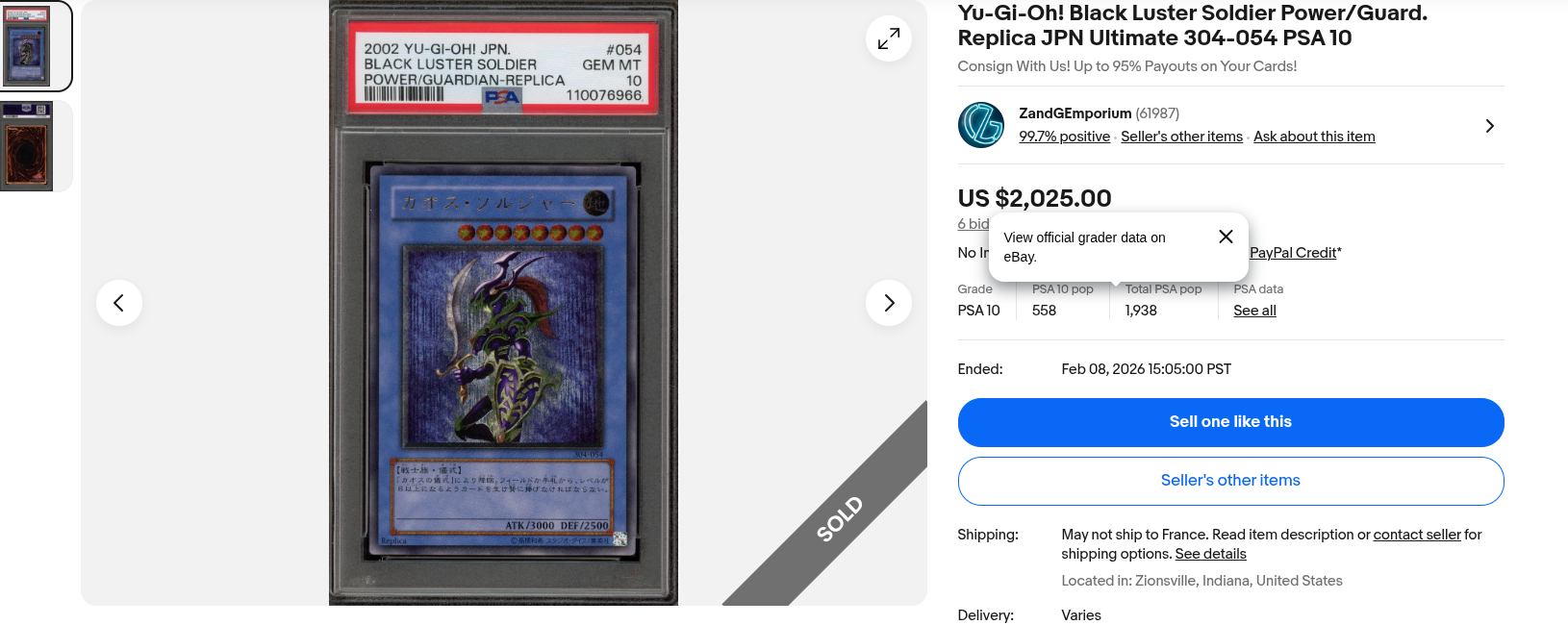

The output the bot produces in a "good scenario."

Enter our best friend: 130points. This site is eBay search on steroids. It reveals the actual "Best Offer" price and provides clean screenshots even when the original eBay page is dead. For every eBay item parsed, we double-check it on 130points if:

- The eBay item page wasn't reachable.

- The item sold for a Best Offer, and the final price differs from the listed one.

The screenshot produced if the eBay picture couldn't be captured.
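The two conditions listed above boil down to a small decision function. The sketch below is an assumption about how that logic might be written, not the bot's real code. In particular, treating an unknown final price as "differs" is my own illustrative choice.

```python
from typing import Optional


def needs_130points_check(page_reachable: bool,
                          best_offer_accepted: bool,
                          listed_price: Optional[float] = None,
                          final_price: Optional[float] = None) -> bool:
    """Decide whether a sale should be double-checked on 130points."""
    # Condition 1: the eBay item page could not be reached.
    if not page_reachable:
        return True
    # Condition 2: a Best Offer was accepted and the final price differs
    # from the listed one (an unknown final price counts as "differs").
    if best_offer_accepted:
        return final_price is None or final_price != listed_price
    return False
```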

Finally, we crop the images. If the eBay item was accessible but an offer was accepted, we send the eBay photo along with a tight crop of the 130points price data for clarity.

3. Other Platforms

Don't worry, I won't ramble much longer. eBay was the hardest part due to that required double-verification. Other platforms we scraped included Fanatics Collect, alt.xyz, Mercari, Yahoo Japan Auctions (to track Japanese data), and Heritage Auctions (for high-end items). While the process was similar, one detail was crucial: file naming. We wanted every file named exactly after the item being viewed.
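For the curious, turning a listing title into a safe file name can look roughly like this. It is a sketch under assumptions: the set of forbidden characters, the `.png` extension, and the 120-character cap are illustrative choices, not the exact rules the bot uses.

```python
import re
import unicodedata


def filename_from_title(title: str, extension: str = ".png",
                        max_length: int = 120) -> str:
    """Build a filesystem-friendly file name from a listing title."""
    # Normalize full-width and compatibility characters to a canonical form.
    normalized = unicodedata.normalize("NFKC", title)
    # Replace characters that are invalid on common filesystems.
    cleaned = re.sub(r'[<>:"/\\|?*\x00-\x1f]', " ", normalized)
    # Collapse whitespace runs and trim stray spaces and dots at the edges.
    cleaned = re.sub(r"\s+", " ", cleaned).strip(" .")
    cleaned = cleaned[:max_length].rstrip(" .")
    return (cleaned or "untitled") + extension
```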

This was easy for eBay and Heritage, but for Mercari and Yahoo Japan, I had to handle Japanese text. I built a self-populating translation database with a helper script. For each Japanese listing, it parses the name character-by-character against a Yu-Gi-Oh database to find a mapping, then uses Google Translate for any remaining parts.
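Here is a minimal sketch of that idea, assuming the database is a plain dictionary of Japanese terms and the translator is any function you pass in. Both are stand-ins: the real script uses a Yu-Gi-Oh database and Google Translate, and the longest-match scan and the way new terms are stored are my assumptions about one reasonable way to do it.

```python
from typing import Callable, Dict, List


def translate_title(title: str, known_terms: Dict[str, str],
                    fallback: Callable[[str], str]) -> str:
    """Translate a title using known terms first, then a fallback translator."""
    parts: List[str] = []
    learned: Dict[str, str] = {}
    pending = ""  # characters seen so far that match no known term
    max_len = max((len(term) for term in known_terms), default=0)

    def flush(chunk: str) -> None:
        chunk = chunk.strip()
        if chunk:
            translated = fallback(chunk)
            learned[chunk] = translated  # remember it for next time
            parts.append(translated)

    i = 0
    while i < len(title):
        # Walk character by character, trying the longest known term first.
        match = None
        for length in range(min(max_len, len(title) - i), 0, -1):
            candidate = title[i:i + length]
            if candidate in known_terms:
                match = candidate
                break
        if match:
            flush(pending)
            pending = ""
            parts.append(known_terms[match])
            i += len(match)
        else:
            pending += title[i]
            i += 1
    flush(pending)
    known_terms.update(learned)  # the "self-populating" part
    return " ".join(parts)
```

Because the fallback is just a callable, the same function works with a real translation client in production and a fake one in tests.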

4. The Real Database

Looking to the future, I’ve been working on a system that captures every element of a sale (date, price, title, platform, format such as auction vs. offer, and so on) and maps them to a data class.
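A data class for a sale might look something like the sketch below. The field names, the `SaleFormat` enum, and the use of `Decimal` for prices are assumptions for illustration. The actual class is still a work in progress.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from enum import Enum


class SaleFormat(Enum):
    AUCTION = "auction"
    FIXED_PRICE = "fixed_price"
    BEST_OFFER = "best_offer"


@dataclass(frozen=True)
class Sale:
    """One completed sale, independent of the platform it came from."""
    sold_on: date
    price: Decimal          # Decimal avoids float rounding on money
    currency: str           # e.g. "USD" or "JPY"
    title: str
    platform: str           # e.g. "eBay", "Mercari"
    sale_format: SaleFormat
    url: str = ""


example = Sale(
    sold_on=date(2024, 3, 4),
    price=Decimal("1250.00"),
    currency="USD",
    title="Example listing title",
    platform="eBay",
    sale_format=SaleFormat.BEST_OFFER,
)
```

Making the class frozen means a sale cannot be changed by accident once it has been parsed.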

Using these objects, it should be possible to build a massive SQL database. This would allow users to value items instantly using clean, structured data. The final missing piece before I build a front-end is a better way to search for specific card rarities—Yu-Gi-Oh is notoriously difficult when it comes to variations. Relying solely on listing titles just isn't accurate enough yet.
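As a rough sketch of where this could go, here is what storing and querying sales looks like with Python's built-in `sqlite3` module. The table layout, the choice of SQLite, and the helper names are assumptions. Prices are stored as integer cents to keep the arithmetic exact.

```python
import sqlite3
from typing import Optional

SCHEMA = """
CREATE TABLE IF NOT EXISTS sales (
    id          INTEGER PRIMARY KEY,
    sold_on     TEXT NOT NULL,      -- ISO date, e.g. 2024-03-04
    price_cents INTEGER NOT NULL,
    title       TEXT NOT NULL,
    platform    TEXT NOT NULL,
    sale_format TEXT NOT NULL
)
"""


def open_db(path: str = ":memory:") -> sqlite3.Connection:
    """Open the database and make sure the sales table exists."""
    conn = sqlite3.connect(path)
    conn.execute(SCHEMA)
    return conn


def add_sale(conn: sqlite3.Connection, sold_on: str, price_cents: int,
             title: str, platform: str, sale_format: str) -> None:
    conn.execute(
        "INSERT INTO sales (sold_on, price_cents, title, platform, sale_format) "
        "VALUES (?, ?, ?, ?, ?)",
        (sold_on, price_cents, title, platform, sale_format),
    )
    conn.commit()


def average_price_cents(conn: sqlite3.Connection, title_keyword: str,
                        since: str) -> Optional[float]:
    """Average price of matching sales on or after `since`, or None if none."""
    row = conn.execute(
        "SELECT AVG(price_cents) FROM sales WHERE title LIKE ? AND sold_on >= ?",
        (f"%{title_keyword}%", since),
    ).fetchone()
    return row[0]
```

A title keyword search like this is exactly the weak spot mentioned above: it cannot tell rarities apart unless the seller wrote them into the title.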

5. Conclusion

What started as a casual conversation in a Discord server turned into a deep dive into the world of web automation. By bridging the gap between raw Python scripts and specialized platforms like eBay and 130points, we moved away from the "tedious pain" of manual entry toward a streamlined, data-driven workflow.

Building this wasn't just about the code—it was about understanding the specific friction points of the TCG market and solving them. Whether it was handling multi-language translation for Japanese auctions or bypassing anti-bot measures, every challenge was a step toward a more transparent market for the community.

6. A Word from Strictly Sealed

"Exqd1a did an unbelievable job optimizing the scraping/integration of the pop report data. But the more impressive work was the accuracy and potency of the sales data accumulation. He was not only able to create a protocol that rips sales data that is accurate and timely, he was able to transcend that by including item categories and products that I personally had never thought to include in the sales data accumulation in the first place. Amazing work."